The AI Revolution Runs on Five Building Blocks

Underneath the chaos of AI news, five new fundamental capabilities explain everything being built right now and everything that's coming.

If you feel like you can’t keep up with AI news, you’re not alone. Every day brings another model release, another startup announcement, another breathless headline. The firehose is relentless.

Here’s the thing: you’re not supposed to track all of it. You’re supposed to understand what’s actually new.

Every major technology cycle goes through a phase where noise drowns out signal. We saw it with PCs in the 1980s, the internet in the 1990s, smartphones in the 2000s. Underneath the noise, something genuinely different was happening each time. Not faster or cheaper. Actually new.

That’s what’s happening with LLMs. The way to think clearly about it is simple: focus on the building blocks.

How to Know Something Is Actually New

Most “new” technologies are just improvements on what came before. Faster processors. Cheaper storage. More accessible cloud.

Real paradigm shifts give us capabilities that literally didn’t exist before. The IBM PC launched in August 1981 and moved computing from corporate mainframes to individual desks. New capability: personal access to computing. The web arrived in the 1990s and connected knowledge that had been isolated in libraries and corporate databases. New capability: universal access to information. The iPhone launched June 29, 2007, and computing left the desk. New capability: computing anywhere, anytime.

Each time, we got new Lego blocks. Fundamental capabilities that didn’t exist before.

Counter-example: Blockchain. For all the hype, it was essentially a database with quirks (immutability, non-repudiation). We’d had relational databases since the 1970s. Most people don’t actually need those quirks. Your bank can reverse fraudulent transactions; Bitcoin cannot. It was a solution in search of a problem. No genuinely new building blocks emerged.

LLMs are different. Here’s why.

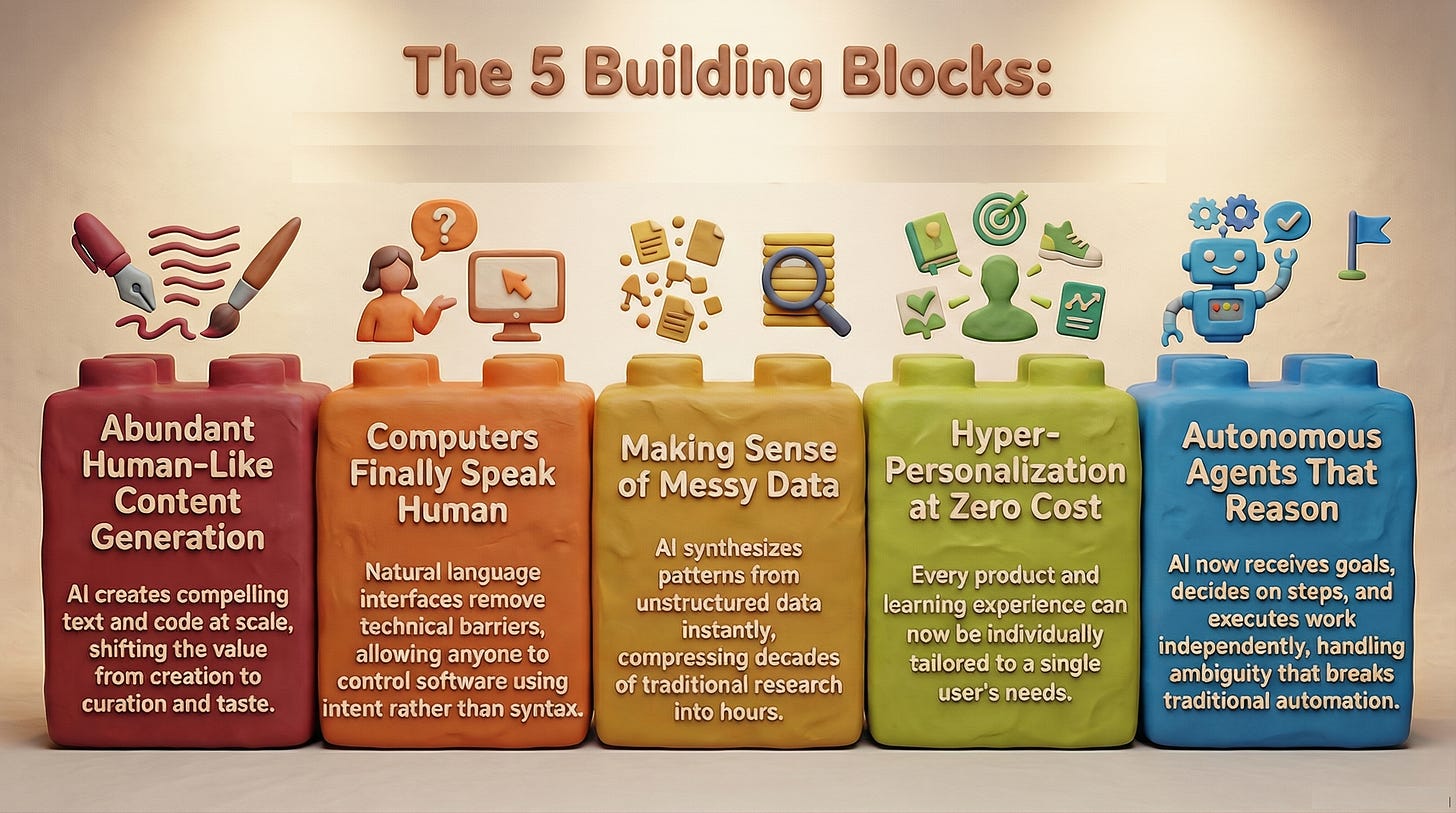

The Five New Capabilities

When ChatGPT launched on November 30, 2022, it reached an estimated 100 million users in two months, faster than any consumer application before it. TikTok took nine months to get there. Instagram took two and a half years. UBS analysts, after two decades tracking consumer internet, wrote that they couldn’t recall “a faster ramp in a consumer internet app.” But the adoption curve isn’t the real story. The real story is what LLMs can do that nothing before could.

1. Content Generation: Creating Things That Sound Human

LLMs generate text, code, images, and music that pass for human work. Not just coherent. Compelling. We’ve casually stepped over the Turing barrier.

Before, content required human creativity, time, and expertise. Now it can be generated at scale, on demand, in any style. In early 2023, GitHub reported that Copilot was writing an average of 46% of the code for developers who used it, up from 27% when Copilot launched in June 2022. In a GitHub study, developers using Copilot completed a coding task 55% faster. That’s not automation of coding. That’s abundance of code itself.

When content generation becomes abundant, curation becomes the scarce skill. Knowing what content should exist. Having taste. Understanding context. The bottleneck shifts from creation to selection.

Example: A marketing team that once spent weeks on campaign copy can now generate hundreds of variants in minutes. The hard part becomes choosing: what resonates, what fits the brand, what works.

2. Natural Language Understanding: Computers Finally Speak Human

LLMs understand imperfect human input: stutters, fragments, ambiguity, context. They respond the way a thoughtful colleague would. The interface between humans and computers has effectively disappeared.

Before, humans learned computer languages. We mastered command lines, filled out rigid forms, clicked through predetermined paths. Now computers adapt to how humans naturally communicate. This is bigger than most people realize. It’s why vibe-coding (building software by describing what you want in plain language) could expand the global developer count from roughly 30 million today to well over 100 million within a decade.

Instead of learning SQL to query a database, you ask: “Show me customers who spent more than $1,000 last quarter but haven’t purchased this quarter.” No syntax cramming required. Intent is enough.
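For contrast, here is roughly what that request looks like when someone has to spell it out in SQL. This is a minimal sketch, not a reference implementation: the `orders` table, its columns, the sample rows, and the Q2/Q3 2024 quarter boundaries are all hypothetical, and SQLite stands in for whatever database a real team would use.

```python
import sqlite3

# Hypothetical schema for illustration: one row per order.
SCHEMA = """
CREATE TABLE orders (
    customer_id TEXT NOT NULL,
    amount      REAL NOT NULL,  -- order total in dollars
    order_date  TEXT NOT NULL   -- ISO-8601 date, e.g. '2024-05-14'
);
"""

# "Customers who spent more than $1,000 last quarter but haven't purchased this quarter."
# Quarter boundaries are half-open date ranges supplied as named parameters.
QUERY = """
SELECT customer_id, SUM(amount) AS last_quarter_spend
FROM orders
WHERE order_date >= :last_start AND order_date < :last_end
GROUP BY customer_id
HAVING SUM(amount) > 1000
   AND customer_id NOT IN (
       SELECT customer_id FROM orders
       WHERE order_date >= :this_start AND order_date < :this_end
   )
ORDER BY customer_id;
"""

# Assumed quarters: last quarter = Q2 2024, this quarter = Q3 2024.
QUARTERS = {
    "last_start": "2024-04-01", "last_end": "2024-07-01",
    "this_start": "2024-07-01", "this_end": "2024-10-01",
}


def lapsed_big_spenders(conn: sqlite3.Connection) -> list:
    """Return (customer_id, last_quarter_spend) rows matching the plain-English request."""
    return conn.execute(QUERY, QUARTERS).fetchall()


def demo_db() -> sqlite3.Connection:
    """Build an in-memory database with a few made-up orders."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [
            ("alice", 800.0, "2024-04-10"),  # alice: $1,200 last quarter,
            ("alice", 400.0, "2024-06-02"),  #   nothing this quarter
            ("bob", 1500.0, "2024-05-20"),   # bob: big spender, but
            ("bob", 50.0, "2024-07-15"),     #   bought again this quarter
            ("carol", 300.0, "2024-05-01"),  # carol: under the threshold
        ],
    )
    return conn


if __name__ == "__main__":
    print(lapsed_big_spenders(demo_db()))  # [('alice', 1200.0)]
```

Every clause in that query encodes a decision the plain-English version leaves implicit: what counts as a quarter, whether “spent” means order totals, where the $1,000 line falls. A conversational interface still has to make those calls; what changes is who has to know the syntax.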

When interfaces become conversational, software becomes accessible to everyone. The distinction between “technical” and “non-technical” workers starts to blur.

3. Data Synthesis: Understand Fuzzy Data, Make Unseen Connections

LLMs make sense of incomplete, unstructured data. They find patterns without pristine inputs. They can predict outcomes before we understand why the prediction works.

The traditional scientific method required theory, then experimentation, then validation. That process can take decades. Now we can feed in messy data*, get results, and understand the theory later. Or never.

AlphaFold 2 demonstrated this at scale. In November 2020, at the CASP14 competition, it effectively solved the 50-year-old protein structure prediction problem, predicting structures with a median accuracy (GDT) score of 92.4 out of 100. It did so without fully explaining the underlying chemistry. Researchers can now use predicted structures to design drugs, materials, and enzymes without waiting for theory to catch up with practice. R&D cycles compress by orders of magnitude.

4. Personalization: Everyone Gets Their Own Version

Hyper-adaptation to individual preferences at massive scale. Not “recommended for you.” Genuinely customized experiences that previously required armies of humans or millions of lines of code.

Personalization was expensive. It required segmentation, manual configuration, human intervention. True 1:1 experiences were economically unviable for all but the largest companies. Now the marginal cost approaches zero.

When personalization is free, one-size-fits-all becomes a competitive disadvantage. Every product can be individually tailored. The clearest example: educational content that adapts to your learning style in real time. Not “here’s the video for visual learners.” Content that notices you’re struggling with a concept and shifts its approach, pacing, and examples until it clicks.

5. Autonomy: Go Think, Decide, and Report Back

This is the one that changes the nature of work most directly.

Autonomous AI agents don’t just respond to prompts. They receive a goal, break it into steps, use tools, make decisions, and complete the work. A human sets the objective and reviews the output. Everything in between is the agent’s job.

Before, automation required explicit programming for every possible state. Rule-based systems broke the moment conditions changed. Agents reason about and around novel situations, adapt mid-task, and escalate only when genuinely stuck. This is new. Automation that handles ambiguity is categorically different from automation that handles only the expected.
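The goal-to-report loop described above can be sketched in a few dozen lines. This is a minimal illustration under loud assumptions: the `plan` function stands in for the model call that does the actual reasoning (here it is a hard-coded toy, which is exactly the rule-based part a real agent replaces), and the tool names, the expense ID, and the $75 meal policy are all hypothetical. What it shows is the shape: the human supplies only the goal, the agent picks and calls tools, feeds failures back into its next decision, and escalates when it has no way forward.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Step:
    tool: str  # name of the tool the planner chose
    arg: str   # input passed to that tool


@dataclass
class Report:
    goal: str
    log: list = field(default_factory=list)  # (tool, arg, observation) tuples
    done: bool = False
    escalation: Optional[str] = None         # set when a human must step in


# A planner maps (goal, log so far) to the next Step, or None when finished.
# In a real agent this would be a model call; here it is a hypothetical stub.
Planner = Callable[[str, list], Optional[Step]]


def run_agent(goal: str, plan: Planner, tools: dict, max_steps: int = 8) -> Report:
    report = Report(goal=goal)
    for _ in range(max_steps):
        step = plan(goal, report.log)
        if step is None:                    # planner judges the goal complete
            report.done = True
            return report
        if step.tool not in tools:          # no sensible way to proceed: escalate
            report.escalation = f"no tool named {step.tool!r}"
            return report
        try:
            observation = tools[step.tool](step.arg)
        except Exception as exc:            # record the failure so the planner can adapt
            observation = f"ERROR: {exc}"
        report.log.append((step.tool, step.arg, observation))
    report.escalation = "step budget exhausted"  # stuck: hand back to a human
    return report


# --- Toy usage: every tool and the scripted planner below are hypothetical. ---
def fetch_receipt_primary(expense_id: str) -> str:
    raise TimeoutError("receipt service unavailable")


def fetch_receipt_backup(expense_id: str) -> str:
    return "dinner, $120"


def check_policy(receipt: str) -> str:
    return "VIOLATION: meals capped at $75" if "$120" in receipt else "OK"


def toy_planner(goal: str, log: list) -> Optional[Step]:
    if not log:
        return Step("fetch_receipt_primary", "exp-42")
    tool, _, obs = log[-1]
    if tool == "fetch_receipt_primary" and obs.startswith("ERROR"):
        return Step("fetch_receipt_backup", "exp-42")  # adapt mid-task
    if tool.startswith("fetch_receipt"):
        return Step("check_policy", obs)
    return None  # policy checked: report back


TOOLS = {f.__name__: f for f in (fetch_receipt_primary, fetch_receipt_backup, check_policy)}

if __name__ == "__main__":
    result = run_agent("Audit expense exp-42", toy_planner, TOOLS)
    for entry in result.log:
        print(entry)
    print("done:", result.done, "| escalation:", result.escalation)
```

Swapping `toy_planner` for a real model call is what would turn this from a script into an agent. The surrounding loop, the step budget, and the escalation path are the guardrails a human still sets.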

The results are early but striking. Ramp, the corporate spend management company, deployed a finance agent in July 2025 that reads company policy documents, audits expenses, flags violations, and generates reimbursement approvals without manual review. OI Infusion Services deployed a healthcare agent for insurance prior authorization and cut approval times from 30 days to 3. That’s not an efficiency improvement. That’s a different category of operation.

When agents handle execution, human judgment shifts to goal-setting and guardrails. What becomes scarce is knowing what to build, not building it.

Why This Is Infrastructure, Not Just Tools

Infrastructure has four characteristics: others build on it, it enables previously impossible capabilities, it becomes foundational to how things work, and it compounds in value as adoption grows.

Electricity wasn’t just better lighting. It let factories reorganize, cities function differently, entire industries emerge. The same pattern holds here. These five building blocks aren’t making existing work faster. They’re enabling new categories of products, services, and business models.

And they compound. Content generation plus personalization equals individualized education at scale. Data synthesis plus agents equals drug discovery that runs overnight. Natural language plus code generation means anyone can build software. All five together create products that adapt, learn, and evolve.

How to Orient Yourself

Stop trying to keep up with absolutely everything. The news cycle is noise.

Focus on the five building blocks. When a new announcement lands, ask which capability it actually uses. Most “new” things are combinations of these five.

Understand what becomes valuable. When capabilities become abundant, different things become scarce: judgment over execution, taste over production, empathy, ex nihilo creativity, ethics. These are the human elements that AI cannot replicate.

Think in workflows. Don’t ask “Should we use AI?” Ask “Which of the five building blocks applies to which workflows?” The question isn’t whether to adopt. The question is where and how to deploy.

Experiment aggressively. There is a lot to learn. Generals have become privates overnight. Pride gains you nothing. Humility and curiosity are critical values right now.

Once you see the pattern, the noise becomes signal.

Which of these five capabilities could transform your work? Pick one. Experiment with it this week. Then tell us what you learn.

This article is part of ProductMind’s ongoing exploration of AI-era product development. Subscribe for frameworks that help make sense of the transformation.

*LLMs still benefit from nice, clean data. Everything does. But they do not need it. A well-written system prompt can replace a lot of regex and static parsing code.

🔍 Want to dive deeper?

Check out our book BUILDING ROCKETSHIPS 🚀 and continue this and other conversations in our 💬 ProductMind Slack community and our LinkedIn community.
